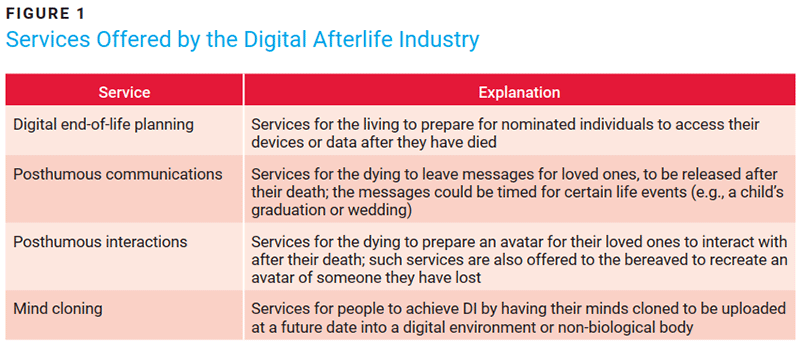

The digital afterlife (DA) industry refers to innovation associated with death and bereavement. This includes enterprises offering digital end-of-life planning and posthumous communication or interaction capabilities (figure 1). Other services overlap with biotechnological efforts, such as mind cloning or brain emulation,1 to realize ambitions of digital immortality (DI).2

Microsoft researchers first coined the term DI in 2000, describing it as a “continuum from enduring fame at one end to endless experience and learning at the other.”3 The concept was inspired by memory extension innovations first conceived toward the end of the Second World War.4 More recently, artificial intelligence (AI) advances have been key drivers for enterprises investing in DA technology (colloquially termed grief tech).5 These services may have originated in North America, but enterprises are promoting similar ideas in China6 and South Korea.7 Similar technology has also been used in India and South America for election campaigns and to raise awareness of issues such as domestic abuse.8

DA is a fast-developing area of innovation. Because the technology engages sensitive matters of human life, it raises important considerations, particularly in the realm of ethics and emerging technologies. Everyone leaves a digital footprint9 on enterprise infrastructure, and that footprint remains even after death. Although the IS/IT profession seldom explores this area, it warrants further consideration. It also introduces governance matters that extend well beyond familiar topics such as privacy.

The Digitization of the Deceased Using AI

The evolution of the DA industry reflects the progression of AI. Early enterprises in the industry concentrated first on voice messaging and chatbots,10 then on graphically animated photographs.11 Now, using metaverse-related technologies,12 providers are bringing to market interactive avatars resembling human digital twins (or, perhaps more accurately, digital ghosts).13 The providers’ rationale is that these avatars enable the continuation of bonds with loved ones and preserve memories for future generations, but the technology can also be used for entertainment, such as resurrecting or age-reversing celebrities.14 Problematic and distressing uses also persist, such as the creation of deepfakes of deceased children, including teenage suicides and murder victims. Ofcom, the United Kingdom’s online regulator, has warned that service providers permitting such uses may be in breach of their obligations under the UK Online Safety Act.15

Collectively, the digitization16 and datafication17 of humans make humanity seem more akin to a network18 than a population. This may have significant implications, ranging from the manipulation of vulnerable people to a possible compromise of the integrity of the human species, should associated biotechnological efforts materialize. Therefore, beyond what environmental, social, and governance (ESG) efforts already incorporate via human rights (such as diversity, supply chains, and modern slavery),19 the question arises: Do other sensitivities concerning the human behind the data need to be further entrenched in enterprise governance? In other words, and specifically regarding the DA industry, do the people these enterprises potentially interact with (i.e., the dying and grieving) need protecting?

The data that remains when someone dies, sometimes referred to as “digital remains”20 or a digital footprint, does not become redundant upon death. As with a corpse, parts of the deceased’s digital footprint may be valuable for research and heritage, as well as for sentimental reasons that might further support the DA industry. However, it has not always been easy for the bereaved to access the digital footprints of their loved ones.

Since 2005, there have been various examples of the bereaved seeking access through the courts to digital remains belonging to, or about, their loved ones. These attempts have not always been successful.21 Such requests have centered on emails, photos, and social media accounts. This implies that digital remains are a form of asset: They are valuable to both enterprises and the bereaved, albeit for different reasons.22

The terms and conditions that users agree to when they are alive allow enterprises to assume ownership of digital footprints and deny access to the bereaved, as the contract was made with the deceased, not their heirs or loved ones. Some enterprises, particularly social media platforms, have introduced concepts such as a legacy contact to make access easier for delegated persons, or implemented policies for deleting data after prolonged inactivity.23 Lawmakers in both Europe and North America have historically focused on the financial value of these assets.24 This encourages the posthumous commodification of digital footprints, essentially turning the personality of the deceased into a marketable asset.25

The idea of digitizing and commodifying humans has been brought to the surface by the current framing of AI systems. The reductive perspective of humans as cybernetic thinking beings26 capable of being replicated by machine learning (ML) algorithms and models does not encapsulate aspects of human rights (e.g., freedom and security, equality before the law, and the prohibition of discrimination). While efforts are being made to incorporate human rights into AI regulatory frameworks globally,27 for example, by eliminating negative algorithmic biases, those protections focus on the living, and limited attention has been given to rights, such as dignity and respect, that may be owed to the dead. One such right is privacy, from which the deceased do not benefit.

Postmortem Privacy

The EU General Data Protection Regulation (GDPR) (Recital 27) explicitly states that it does not apply to the personal data of the deceased, though EU member states may provide national rules.28 Outside of the GDPR, there are some protections regarding medical records, for example.29 However, academic studies suggest that this approach does not reflect the opinions of users who see value in their privacy being maintained after their death.30

To address this gap, some legal academics have argued for postmortem privacy (PMP): “the right to privacy and data protection post-mortem” or, more broadly, “the right of the deceased to control their personality rights and digital remains post-mortem.”31 Presently, lawmakers have little appetite to legislate for PMP (some have investigated it and, without ruling it out, recommended further study).32 Greater priority is given to issues such as online harm and AI risk. It has thus fallen to enterprises to introduce self-regulatory policies reactively after legal challenges, as exemplified by the introduction of legacy contacts. As more people leave growing quantities of digital remains, it will be prudent for IS/IT professionals to proactively consider the impact this could have within their enterprise environments. Digital remains do not consist solely of data on social media platforms; because users are often both content creators and consumers, their remains also reside within the infrastructure of retailers, insurance providers, and genealogy services, among others. The potential further use of digital remains within these enterprises is particularly concerning, as AI tools can draw inferences from digital remains that apply to living persons without their knowledge.33 While some digital remains must be retained for legal reasons, IS/IT professionals should consider the ethical, and perhaps moral, implications of using digital remains when the person is no longer able to consent to such use.

Deceased Productive Colleagues

Another aspect of DA that may concern IS/IT professionals is its overlap with a growing trend of developing synthetic humans or AI companions based on real or generated people. Recent examples include Replika, Character.AI, and Meta AI. OpenAI has proposed AI agents as virtual employees,34 and these capabilities could lead to other prospective technologies affecting the profession, such as emulations of deceased workers created from data captured in the workplace alongside their personal digital remains.

The mechanisms of producing “dead labor”35 are not new. In 2023, US screen actors went on strike regarding production companies’ use of AI tools to reanimate, or replace, actors based on captured data.36 Similarly, two years after the death of a professor, an online course at Concordia University (Montreal) was found to be posting lecture videos that had been pre-recorded by the deceased.37

Online and remote teaching predominated during the COVID-19 pandemic, as did the use of remote communication services across many other industries, often creating large repositories of recorded interaction data. Ownership and reuse of this data are partially addressed by intellectual property and employment law. However, returning to the notion of ESG, these legal approaches may not fully account for sociocultural considerations around new technologies, such as the ethical exploitation of legal rights and the protection of dignity, which may need to be incorporated into risk management and cybersecurity practices. Further, a precautionary approach to reusing human data might be required as the resulting recreations become more sophisticated through technological advances. It may also be necessary to address scenarios in which such recreations appear to be self-aware or to demonstrate levels of consciousness, for example, by considering the concept of avatar rights.38

Developments such as the possibility of employees interacting with digital recreations of deceased colleagues suggest that those affected will not be limited to the dying or grieving (in the context of bereavement) but may include almost everyone, across a broad range of life contexts. There appears to be little consideration of, or preparation for, this possibility. Further, there are wider unresolved psychological matters regarding interactions with such creations, some of which have had tragic consequences.39 However, addressing these matters may conflict with PMP, as the protection of the living should take priority.

Harming the Deceased or the Living?

Calls for responsible innovation40 have permeated the discourse on AI risk and regulation. However, as technology encroaches on wider aspects of life and death, both the scope of responsibility and the range of engaged stakeholders may also need to widen. This may prove unpopular with technology innovators who lack professional experience or support when addressing social complexities regarding issues such as vulnerable users.

While the onus of establishing appropriate forms of governance within the DA industry appears to rest with enterprises themselves, many innovators shun or deny responsibility for the uses of their products or for their users.41 Therefore, if DA becomes mainstream, it may fall to the IS/IT profession to steer governance. This could involve collaborating with ethicists to address complex issues such as the rights and protections owed to the deceased. Can the deceased, for instance, be classed as vulnerable in the context of harms that might arise from the DA industry?

There are legal and philosophical debates on whether the dead can be harmed.42 As with enterprises, a person’s legacy is at risk of reputational damage. For some users, this prospect is sufficient to prompt an interest in knowing what happens to their data after their death and a wish to have autonomy over its future use, including rights to prevent reuse or demand deletion.43 Yet while the UN Universal Declaration of Human Rights identifies autonomy and dignity as foundational concepts of human rights,44 they are often only weakly reflected in enterprise ESG strategies.

The DA industry overlaps with biotechnological efforts. Should these efforts succeed, beliefs regarding the harm and autonomy of the deceased may need reimagining if someone is indeed digitally resurrected. Alternatively, one may believe that a fully functioning mind clone of the deceased in a possible future would be a separate entity that should be afforded something akin to legal personhood, or that may require new rules.45 A further question arises if these entities are essentially software and algorithms: How effectively can current IS/IT standards and practices manage their corresponding risk?

Conclusion

Technologies that can digitally recreate the voice or image of someone who is deceased have been present for almost a decade and have been used primarily for entertainment or sentimental reasons. However, there are enterprises harnessing similar technologies specifically for the dying and those grieving, and their proliferation has correlated with AI advancements.

Such innovation has materialized within both social and enterprise settings. These technological efforts provide food for thought and anticipate developments that may require risk management mechanisms to be reimagined, while also raising new concerns. While not all enterprises will form part of the DA industry, the digital footprints of the deceased are likely to feature within their infrastructure in various forms. The IS/IT profession must consider how these footprints are managed. This requires sensitivity and an expansion of established ESG policies to give greater consideration to human rights.

Endnotes

1 Kurzweil, R.; The Singularity is Near: When Humans Transcend Biology, Penguin Books, USA, 2006

2 Bell, G.; Gray, J.; “Digital Immortality,” Communications of the ACM, vol. 44, iss. 3, 2001, p. 28-31

3 Bell; Gray; “Digital Immortality”

4 Bush, V.; “As We May Think,” The Atlantic Monthly, vol. 76, iss. 1, 1945, p. 101-108

5 Ramirez, V.B.; “Grief Tech Uses AI to Give You (and Your Loved Ones) Digital Immortality,” SingularityHub, 16 August 2023

6 Zhou, V.; “AI ‘Deathbots’ Are Helping People in China Grieve,” Rest of World, 17 April 2024

7 Hayden, S.; “Mother Meets Recreation of Her Deceased Child in VR,” Road to VR, 7 February 2020

8 Dutt, B.; “Indian Politicians Are Bringing the Dead on the Campaign Trail, With Help From AI,” Rest of World, 6 May 2024; Partnership on AI, How AI Video Company D-ID Received Consent to Digitally Resurrect Victims of Domestic Violence

9 Rawindaran, N.; Bentotahewa, V.; “Death Becomes Data,” in Data Protection: The Wake of AI and Machine Learning, Hewage, C.; Yasakethu, L.; et al.; Springer Nature Switzerland, USA, 2024, p. 29-45

10 Henrickson, L.; “Chatting With the Dead: The Hermeneutics of Thanabots,” Media, Culture & Society, vol. 45, iss. 5, 2023, p. 949-966

11 Kidd, J.; Nieto McAvoy, E.; “Deep Nostalgia: Remediated Memory, Algorithmic Nostalgia, and Technological Ambivalence,” Convergence: The International Journal of Research into New Media Technologies, vol. 29, iss. 3, 2023

12 KPMG, Future of Extended Reality, 2022; McKinsey & Company, Value Creation in the Metaverse, 2022

13 Morris, M.R.; Brubaker, J.R.; “Generative Ghosts: Anticipating Benefits and Risks of AI Afterlives,” ArXiv, 12 December 2024

14 Tzanidis, T.; Langston, S.; “Abba and Tupac in the Metaverse: How Digital Avatars Could be the Bankable Future of Band Touring,” The Conversation, 14 April 2022

15 Ofcom, “Open Letter to UK Online Service Providers Regarding Generative AI and Chatbots,” 8 November 2024

16 Baudrillard, J.; Passwords, Verso, USA, 2003

17 Bassett, D.J.; “Who Wants to Live Forever?: Living, Dying and Grieving in our Digital Society,” Social Sciences, vol. 4, iss. 4, 2015, p. 1127-1139

18 Bollmer, G.; Inhuman Networks: Social Media and the Archaeology of Connection, Bloomsbury, 2018

19 Simmons & Simmons, “Human Rights Due Diligence as Part of 'Social' in ESG,” 8 June 2021

20 Harbinja, E.; Digital Death, Digital Assets and Post-Mortem Privacy, Edinburgh University Press, United Kingdom, 2022

21 Harbinja; Digital Death

22 Harbinja; Digital Death

23 Harbinja; Digital Death; Brubaker, J.R.; Callison-Burch, V.; “Legacy Contact: Designing and Implementing Post-Mortem Stewardship at Facebook,” in Proceedings of the 2016 CHI Conference on Human Factors in Computing Systems, Association for Computing Machinery, USA, 2016, p. 2908-2919

24 Harbinja; Digital Death

25 Öhman, C.; The Afterlife of Data: What Happens to Your Information When You Die and Why You Should Care, University of Chicago Press, USA, 2024; Zuboff, S.; The Age of Surveillance Capitalism: The Fight for a Human Future at the New Frontier of Power, Profile Books, United Kingdom, 2019

26 Turing, A.M.; “Computing Machinery and Intelligence,” Mind, vol. 59, iss. 236, 1950, p. 433-460; Wiener, N.; God & Golem, Inc: A Comment on Certain Points Where Cybernetics Impinges on Religion, The MIT Press, USA, 1966

27 Türk, V.; “Artificial Intelligence Must Be Grounded in Human Rights, Says High Commissioner,” United Nations Human Rights Office of the High Commissioner, 12 July 2023; Ministry of Justice and The Rt Hon Shabana Mahmood MP, “UK Signs First International Treaty Addressing Risks of Artificial Intelligence,” United Kingdom, 5 September 2024

28 Harbinja; Digital Death

29 Rawindaran; Bentotahewa; Death Becomes Data; Kohl, U.; “What Post-Mortem Privacy May Teach Us About Privacy,” Computer Law & Security Review, vol. 47, 2022

30 Harbinja, E.; Morse, T.; et al.; “Digital Remains and Post-Mortem Privacy in the UK: What Do Users Want?,” BILETA 2024 Conference, 27 March 2024; Morse, T.; Birnhack, M.; “The Posthumous Privacy Paradox: Privacy Preferences and Behavior Regarding Digital Remains,” New Media & Society, vol. 24, iss. 6, 2022, p. 1343-1362; Gamba, F.; “The Right to be Forgotten and Paradoxical Visibility. Privacy, Post-Privacy and Post-Mortem Privacy in the Digital Era,” Problemi dell'informazione, vol. 2, 2020

31 Harbinja; Digital Death

32 Australian Government, Privacy of Deceased Individuals, Australia, 16 August 2010

33 Öhman; The Afterlife of Data

34 Milmo, D.; “‘Virtual Employees’ Could Join Workforce as Soon as This Year, OpenAI Boss Says,” The Guardian, 6 January 2025

35 Öhman; The Afterlife of Data

36 Horton, A.; “Bryan Cranston Leads Actors’ Strike Rally in New York: ‘We Will Not Allow You to Take Away our Dignity,’” The Guardian, 25 July 2023

37 Kneese, T.; “How a Dead Professor Is Teaching a University Art History Class,” Slate, 27 January 2021

38 Cheong, B.C.; “Avatars in the Metaverse: Potential Legal Issues and Remedies,” International Cybersecurity Law Review, vol. 3, iss. 2, 2022, p. 467-494

39 Ofcom, “Open Letter”

40 Owen, R.; Bessant, J.R.; et al.; Responsible Innovation: Managing the Responsible Emergence of Science and Innovation in Society, John Wiley & Sons, USA, 2013

41 Laskor, K.; “Review - ‘Eternal You’: A Documentary About the Digital Afterlife Industry,” Part of Life, 31 July 2024

42 Harbinja; Digital Death; Öhman; The Afterlife of Data

43 Harbinja; Morse; et al.; Digital Remains; Morse; Birnhack; The Posthumous Privacy Paradox; Gamba; “The Right to be Forgotten”

44 United Nations, Universal Declaration of Human Rights, 1948

45 Novelli, C.; Floridi, L.; et al.; “AI as Legal Persons: Past, Patterns, and Prospects,” 24 November 2024

KHADIZA LASKOR | CISA, CRISC, CDPSE, CISSP

Is a Ph.D. student in the Cyber Security Doctoral Training Programme at the University of Bristol (United Kingdom). She has almost 20 years of IT audit, risk, and compliance experience within the banking industry.